Marketing Automation Statistics By Revenue, Market Share, Professional Jobs, Software Growth and Facts

Updated · Dec 12, 2024

Table of Contents

- Introduction

- Editor’s Choice

- Marketing Technology Revenue

- Marketing Technology Solutions Availability

- The Marketing Budget of Companies

- Salary of Marketing Technology Executives

- Marketing Technology Spending of B2B

- Marketing Analytics Market Share

- Marketing Technology Professional Jobs

- Marketing Automation Software Growth

- The Most Common Areas Used For Automation

- Leading Marketing Automation Solution Provider

- CDP Industry Revenue

- Customer Data Vendor’s Growth

- Customer Data Platform Industry By Region

- CDP Employee Distribution

- CDP Used By Marketers

- Top SaaS Companies

- Small Businesses Concerned Over AI

- SaaS Implementation Security Concerns

- Conclusion

Introduction

Marketing Automation Statistics: Technology has become the cornerstone of strategic business growth in the rapidly evolving digital marketing landscape. Marketing automation represents a transformative approach that empowers organizations to streamline processes, personalize customer experiences, and drive more efficient marketing strategies.

These Marketing Automation Statistics cover the key trends, market dynamics, spending patterns, and emerging technological innovations that are reshaping how businesses connect with their audiences in an increasingly digital marketplace.

Editor’s Choice

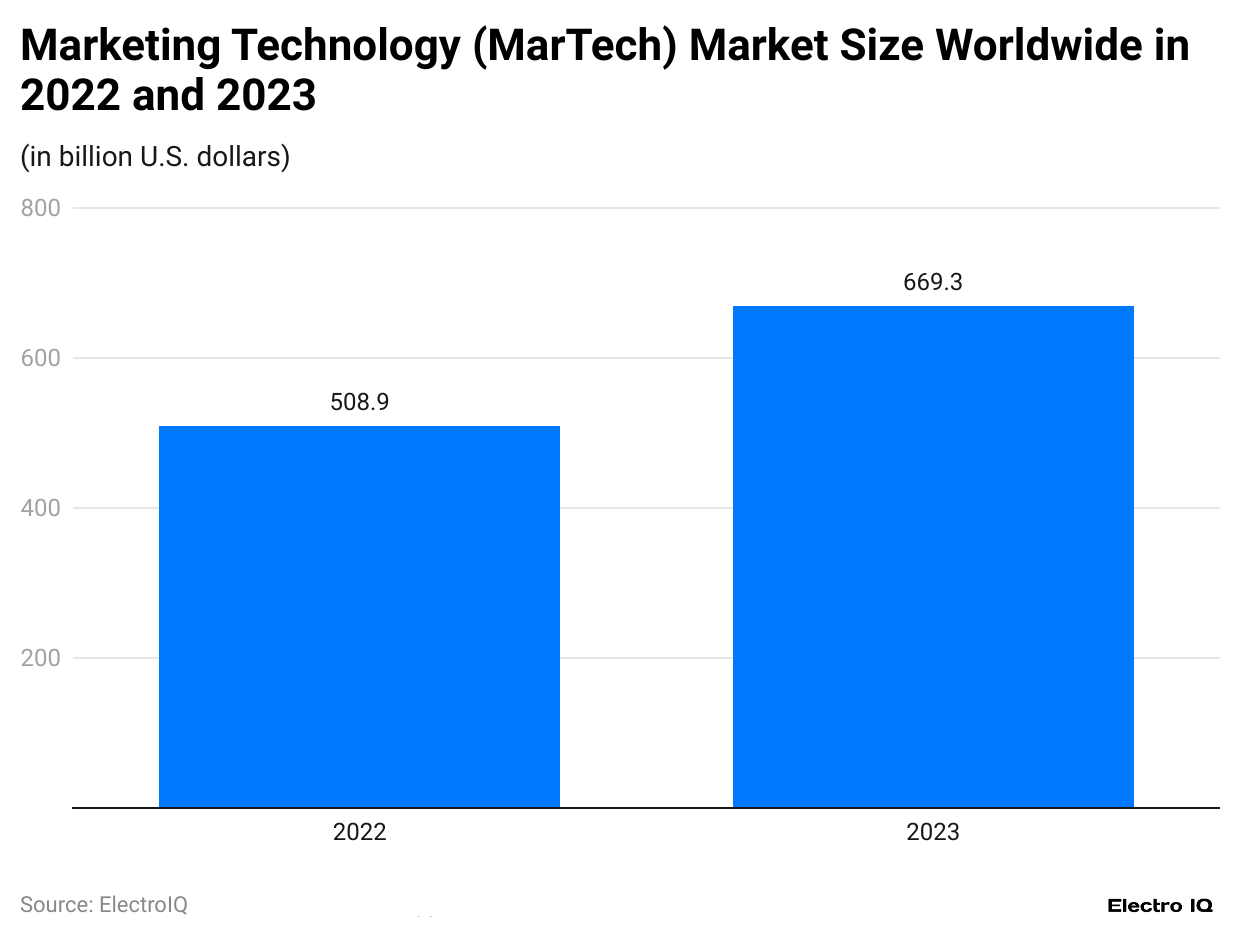

- Marketing technology market size grew from USD 508.9 billion in 2022 to USD 669.3 billion in 2023

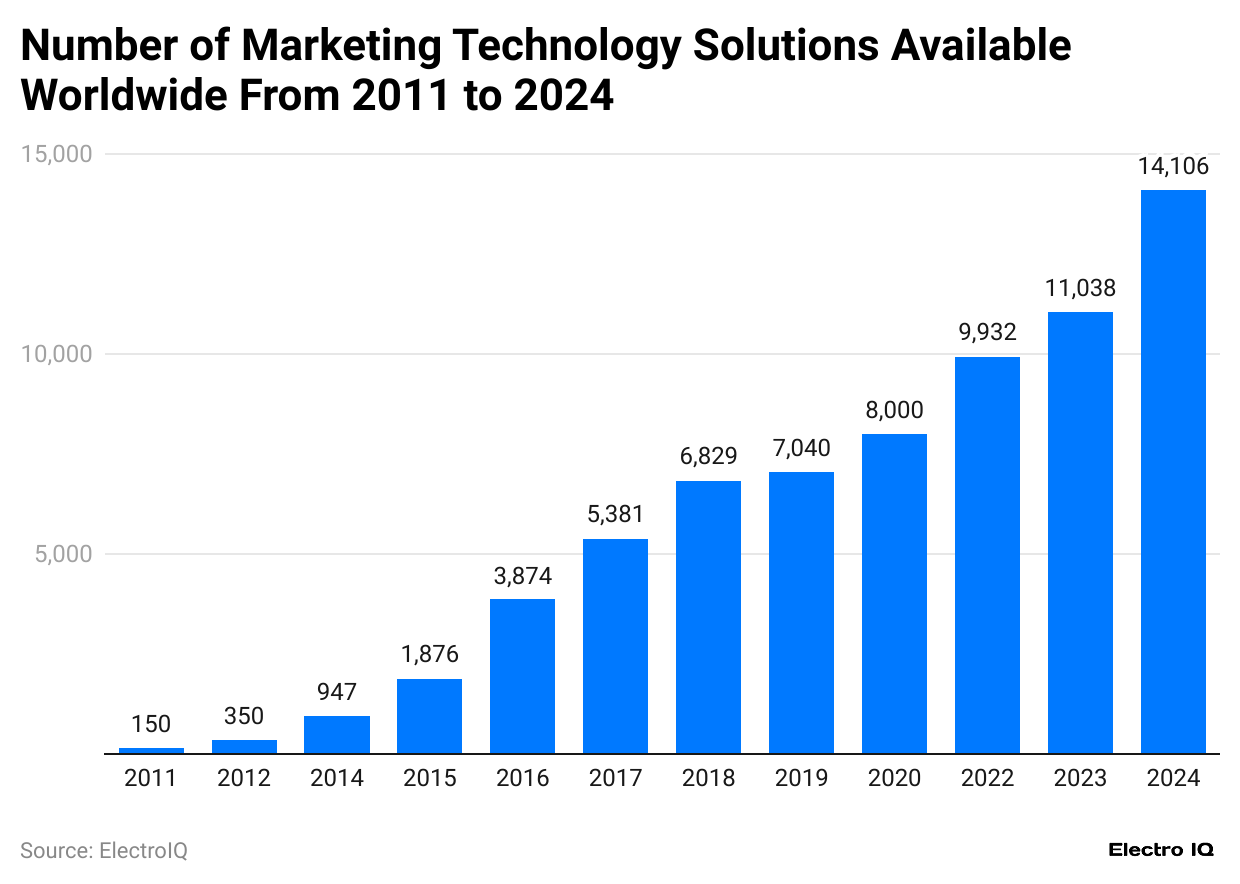

- Available marketing technology solutions increased from 150 in 2011 to 11,038 in 2023

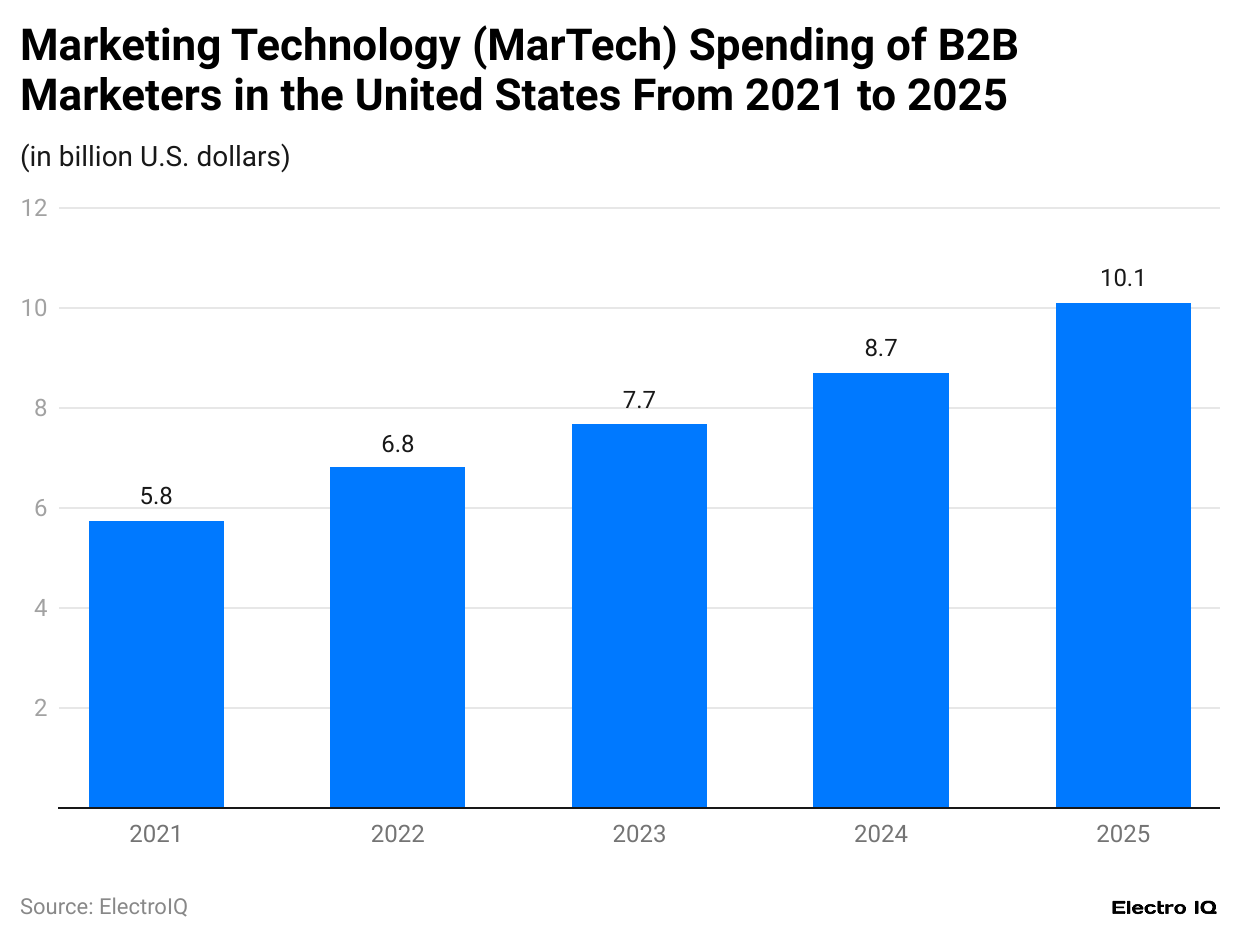

- B2B marketing technology spending is expected to reach USD 10.11 Billion by 2025

- HubSpot leads marketing automation with 34.72% market share

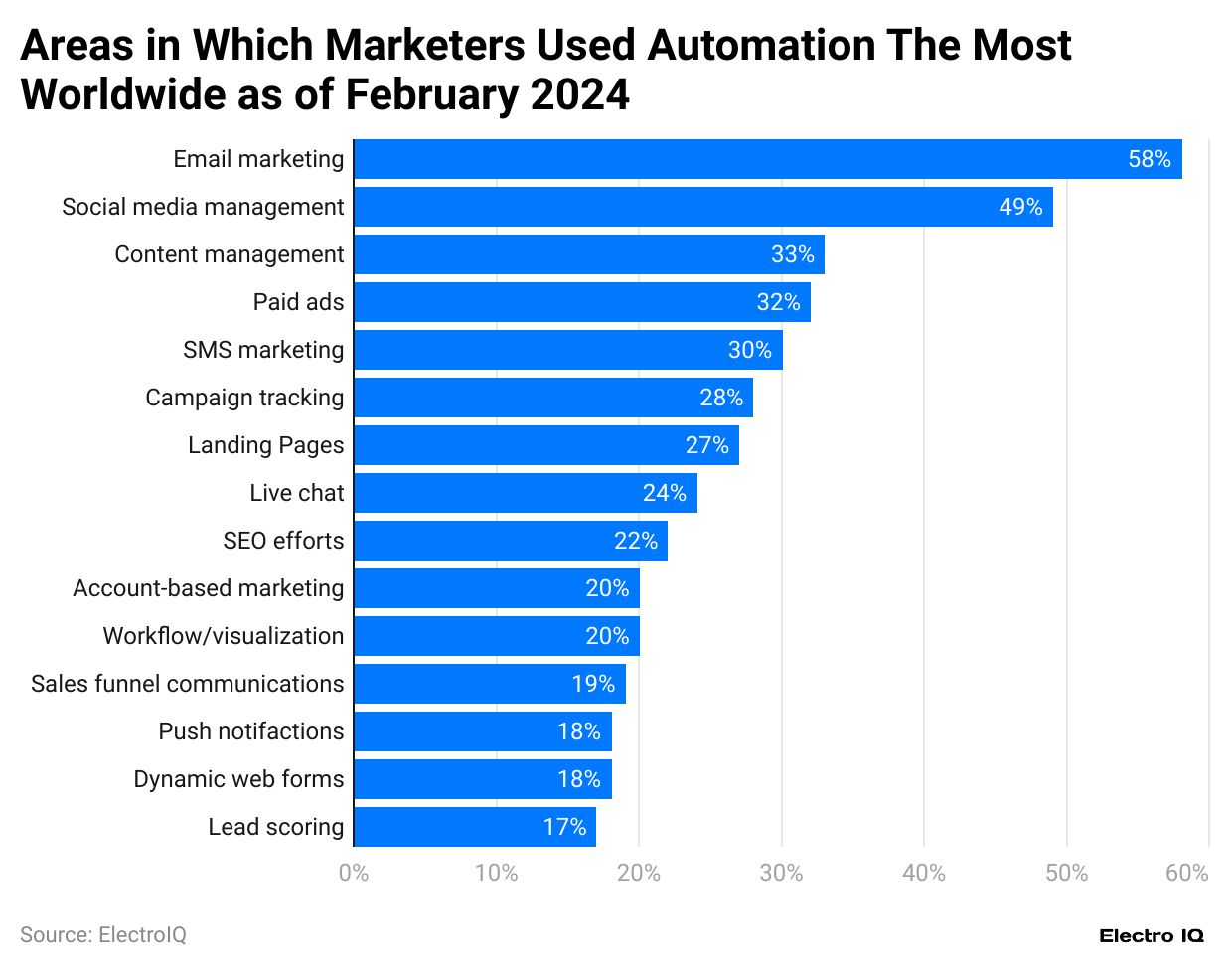

- Email marketing leads automation at 58% usage

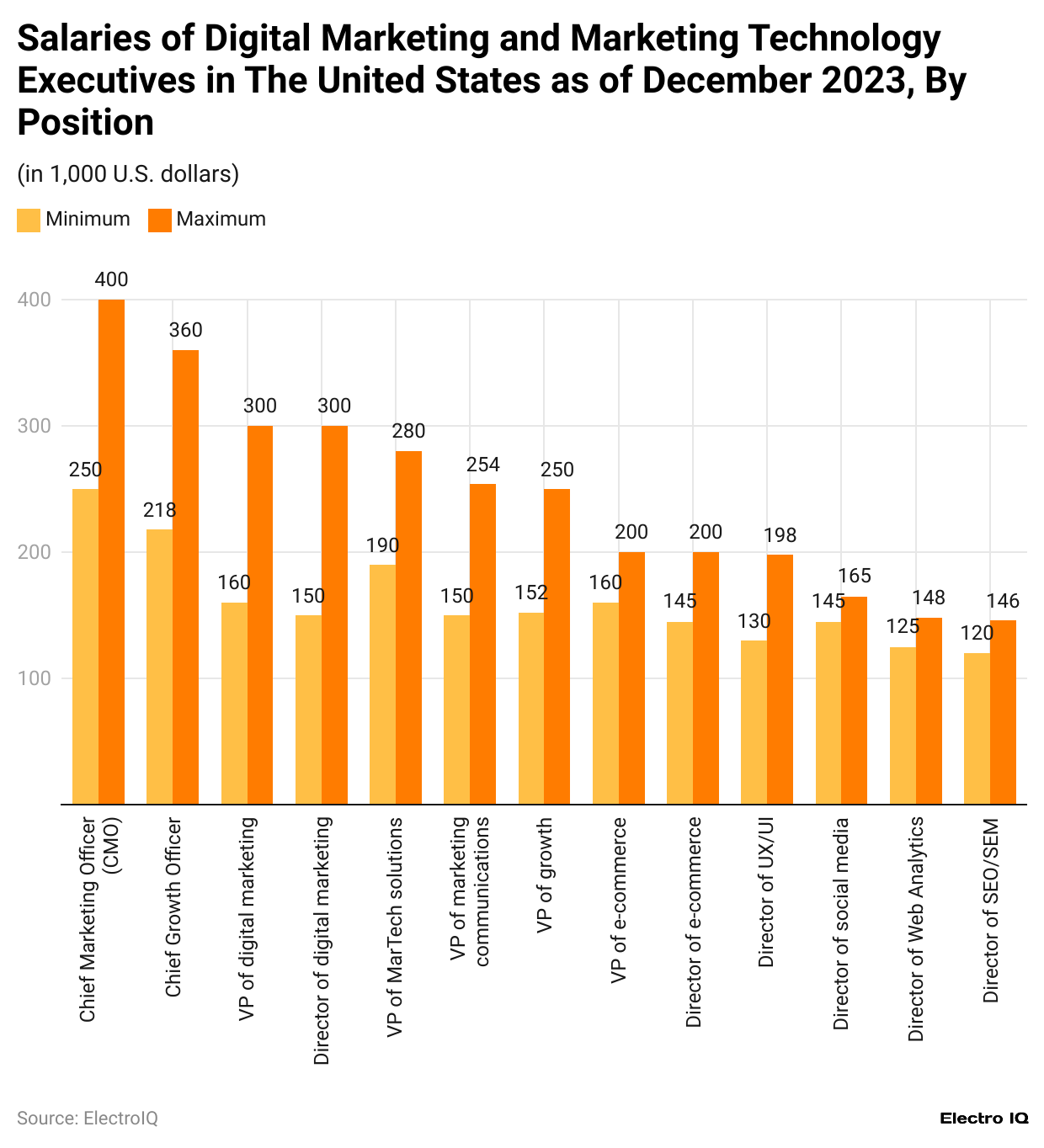

- CMO salaries range from USD 250,000 to USD 400,000

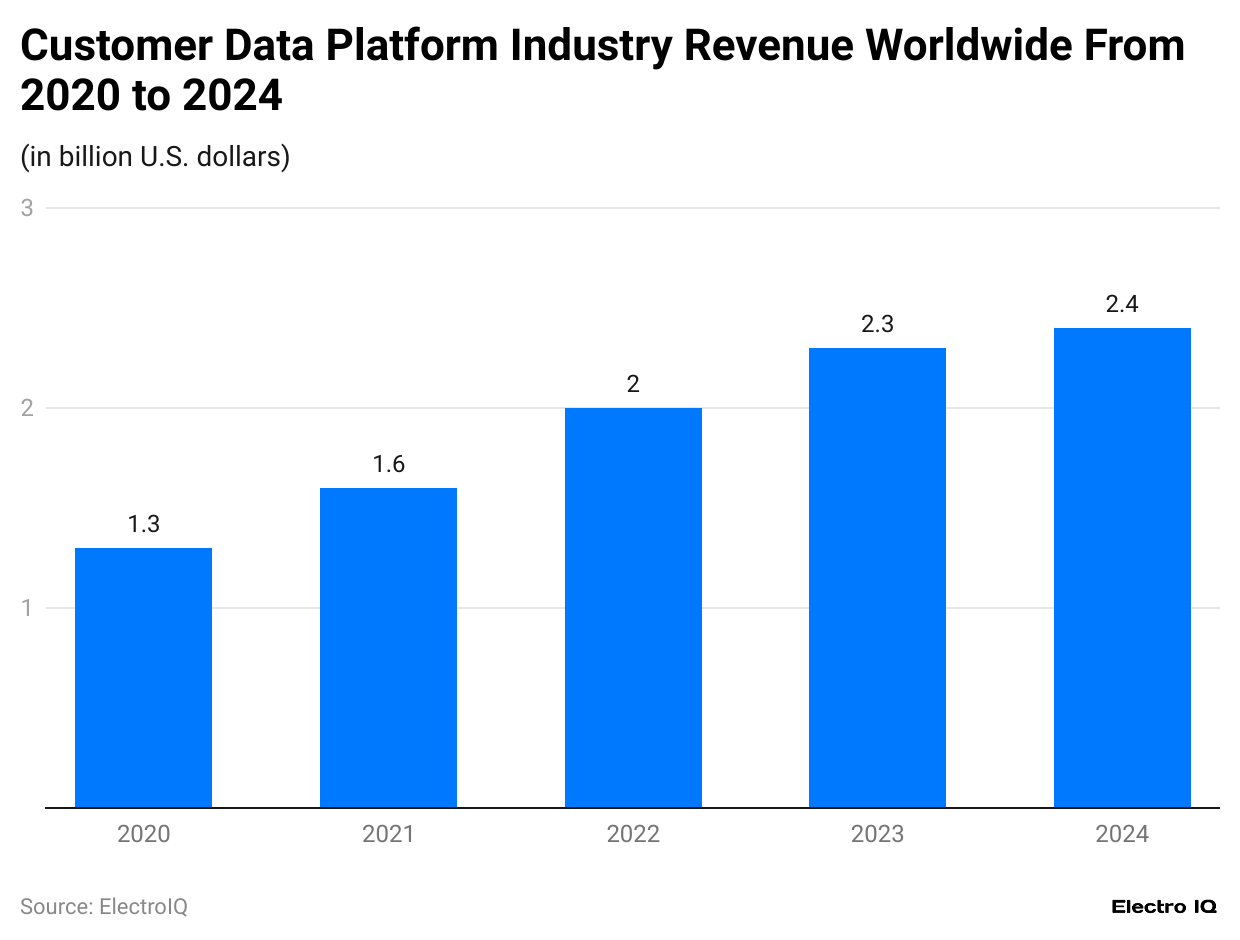

- Customer data platform industry revenue grew from USD 1.3 billion in 2020 to USD 2.3 billion in 2023.

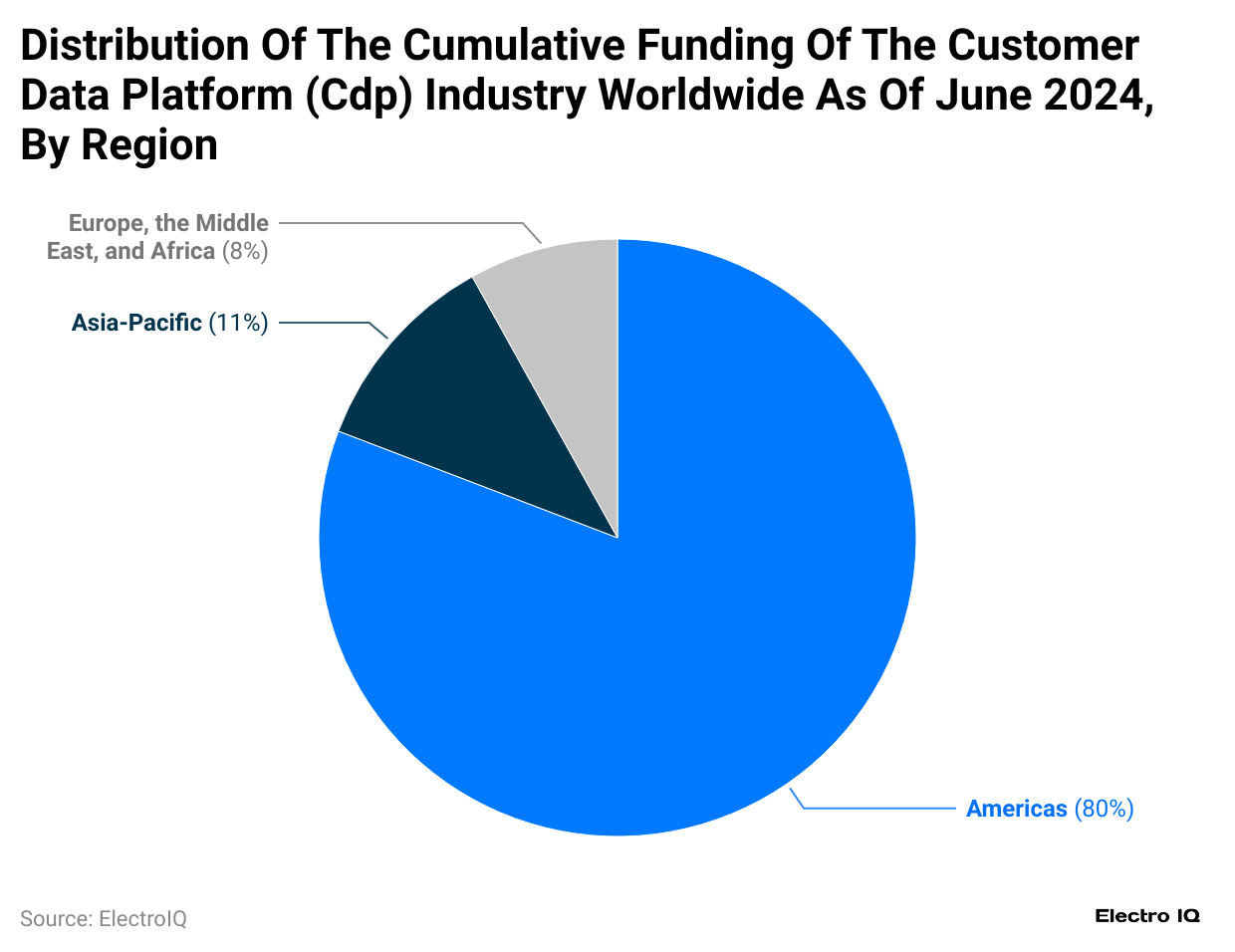

- America dominates the CDP market with an 80% market share.

- Salesforce tops SaaS companies with USD 310.9 Billion market cap.

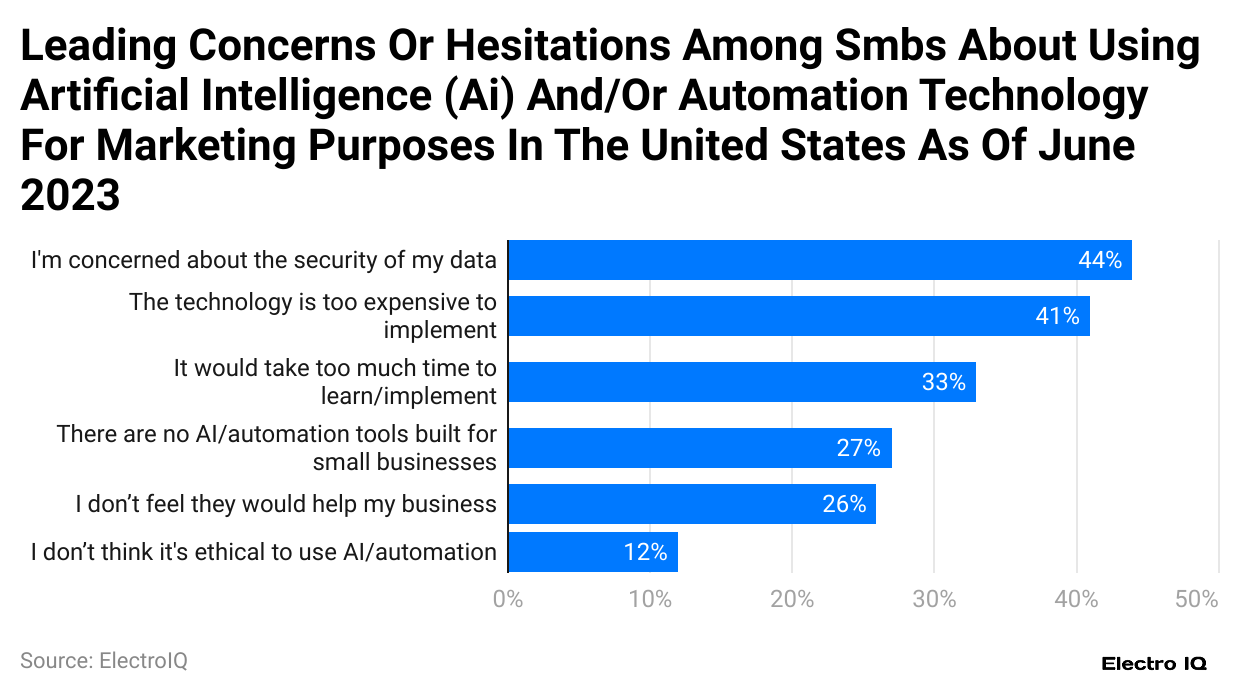

- 44% of small businesses are concerned about data security in AI

- Marketing automation software revenue predicted to reach USD 11.25 Billion by 2031.

- Social media management automation is used by 49% of marketers.

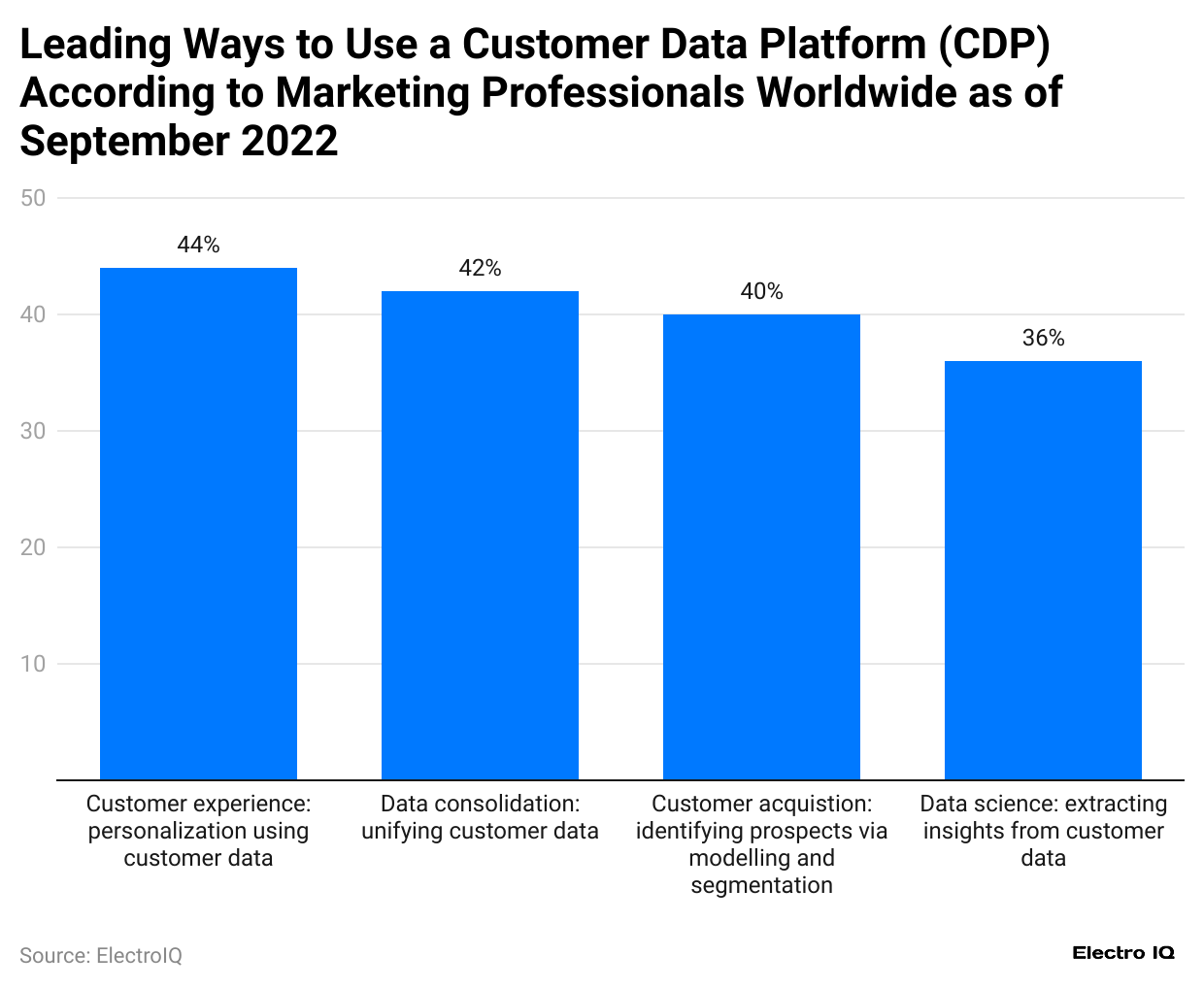

- 42% of marketers use CDPs for customer data consolidation.

- Identity theft is a major concern for 43% of SaaS application users.

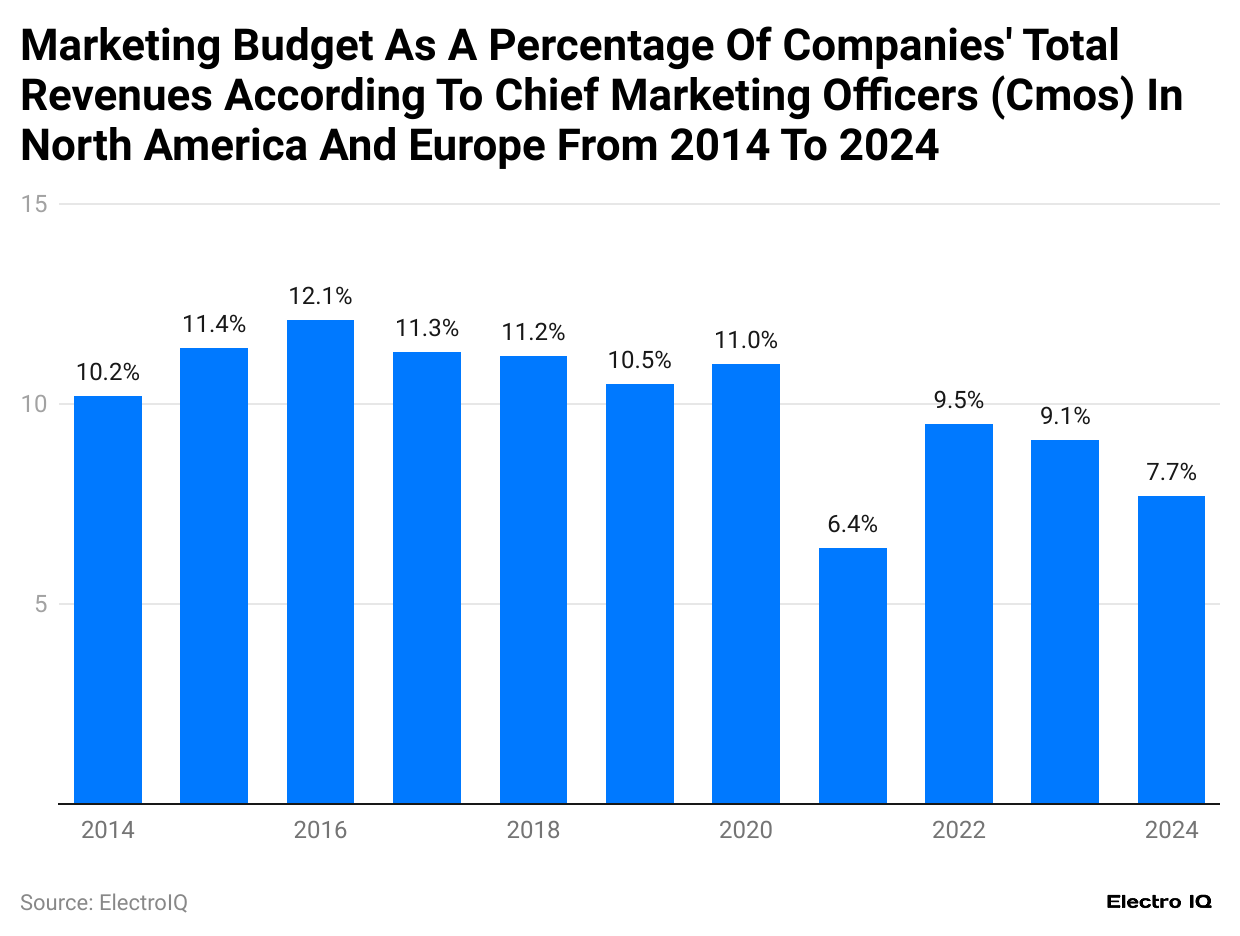

- Marketing budget growth remains steady at 9.1% in 2023.

Marketing Technology Revenue

(Reference: statista.com)

- Marketing Automation Statistics show that the marketing technology market size has increased consistently.

- In 2022, the market size was 508.9 billion USD.

- By the end of 2023, the market size had increased to 669.3 billion USD.
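As a quick check on the two figures above, the year-over-year change can be worked out directly. The snippet below is a minimal sketch; the helper name `percent_change` is an illustrative choice and not something from the source.

```python
def percent_change(old: float, new: float) -> float:
    """Return the percentage change going from old to new."""
    return (new - old) / old * 100


# Market size figures quoted above, in billions of USD.
market_2022 = 508.9
market_2023 = 669.3

growth = percent_change(market_2022, market_2023)
print(f"Marketing technology market growth, 2022 to 2023: {growth:.1f}%")
```

On these numbers the market grew by roughly 31.5% in a single year.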

Marketing Technology Solutions Availability

(Reference: statista.com)

- Marketing Automation Statistics show that the number of available marketing technology solutions has increased consistently.

- In 2011, 150 marketing solutions were available.

- As of 2023, 11,038 marketing technology solutions were available.

The Marketing Budget of Companies

(Reference: statista.com)

- Marketing Automation Statistics show that companies’ marketing budgets have been increasing consistently.

- In 2014, the marketing budget growth rate was 10.2%.

- As of 2023, the marketing budget growth rate is 9.1%.

Salary of Marketing Technology Executives

(Reference: statista.com)

- Marketing Automation Statistics show that the Chief Marketing Officer (CMO) is the highest-paid marketing technologist, with a salary ranging from 250K USD to 400K USD.

- The chief growth officer has the 2nd highest salary, ranging from 218K USD to 360K USD.

- The VP of digital marketing has the 3rd highest salary, ranging from 160K USD to 300K USD.

- The director of digital marketing has the 4th highest salary, ranging from 150K USD to 300K USD.

Marketing Technology Spending of B2B

(Reference: statista.com)

- Marketing Automation Statistics show that marketing technology spending among B2B marketers has consistently increased.

- In 2021, B2B marketing technology spending was 5.75 billion USD.

- As of 2023, B2B marketing technology spending has reached 7.68 billion USD.

- By the end of 2025, B2B marketing technology spending is expected to reach 10.11 billion USD.
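The three data points above can be turned into an implied compound annual growth rate (CAGR). This is only a sketch under the assumption that spending grows at a constant rate between the quoted years; the function name `cagr` and the `spend` dictionary are illustrative and not from the source.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# B2B marketing technology spending quoted above, in billions of USD.
spend = {2021: 5.75, 2023: 7.68, 2025: 10.11}

rate_21_23 = cagr(spend[2021], spend[2023], 2)
rate_23_25 = cagr(spend[2023], spend[2025], 2)
rate_overall = cagr(spend[2021], spend[2025], 4)

print(f"Implied CAGR 2021-2023: {rate_21_23:.1%}")
print(f"Implied CAGR 2023-2025: {rate_23_25:.1%}")
print(f"Implied CAGR 2021-2025: {rate_overall:.1%}")
```

Under that assumption, spending grows at roughly 15% per year across the whole period, with the 2023 to 2025 forecast slightly slower than the 2021 to 2023 stretch.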

Marketing Analytics Market Share

(Reference: statista.com)

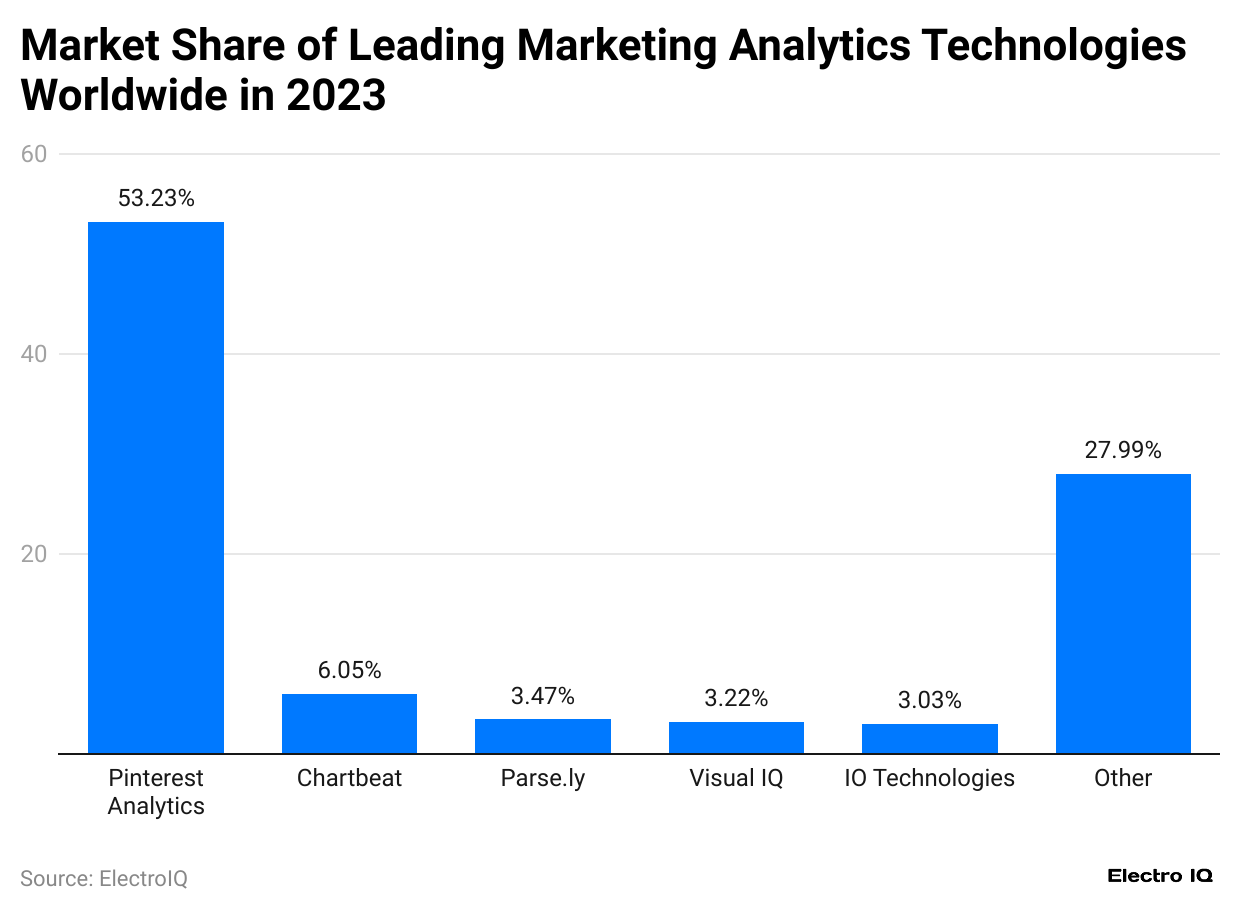

- Marketing Automation Statistics show that the leading marketing analytics technology holds the highest market share, at 53.23%.

- All other technologies combined account for 27.99%.

- Chartbeat has a 6.05% market share among marketing analytics.

- ly has a 3.47% market share among marketing analytics technologies.

Marketing Technology Professional Jobs

(Reference: statista.com)

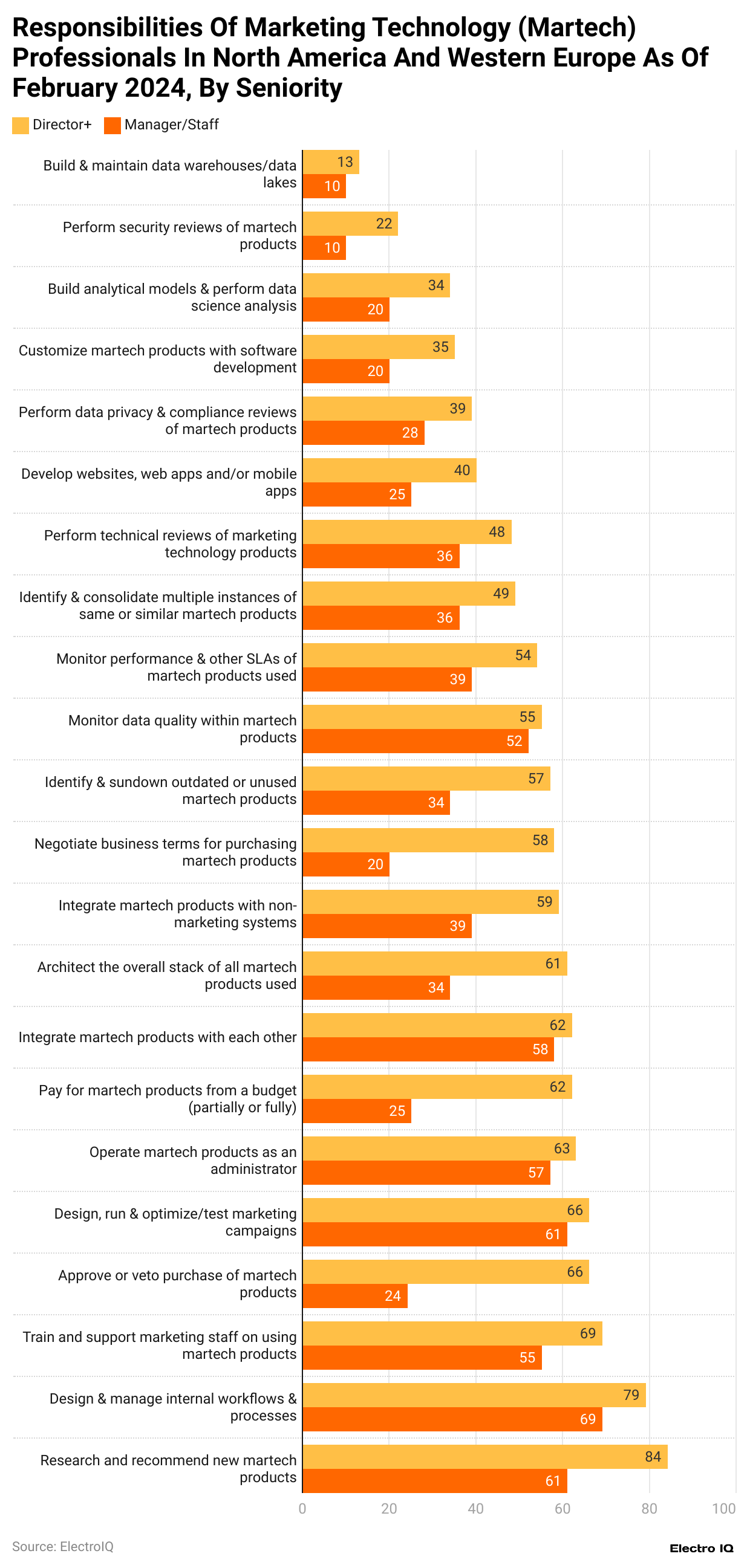

- Marketing Automation Statistics show that 84% of director-level respondents research and recommend new Martech products.

- 79% of director-level respondents design and manage internal workflows.

- 69% of respondents train and support marketing staff on Martech products.

Marketing Automation Software Growth

(Reference: statista.com)

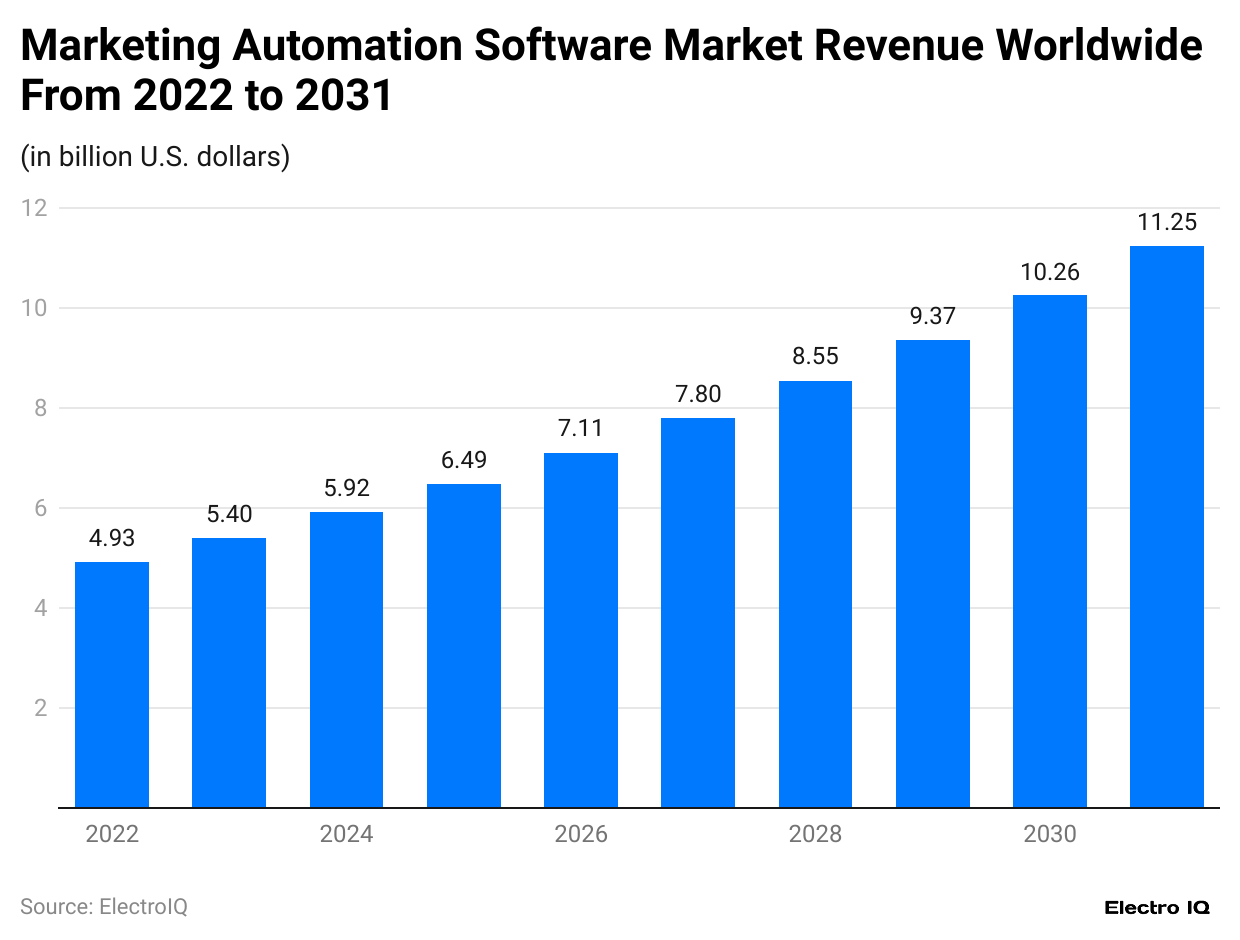

- Marketing Automation Statistics show that worldwide marketing automation software revenue has consistently increased.

- In 2022, the marketing automation software market was valued at 4.93 billion USD.

- By the end of 2023, the marketing automation software revenue was USD 5.4 billion.

- By the end of 2031, the marketing automation software market revenue is predicted to be USD 11.25 billion.

The Most Common Areas Used For Automation

(Reference: statista.com)

- Marketing Automation Statistics show that email marketing is the most popular area in which marketers use automation, according to 58% of respondents.

- Social media management is a popular area used for automation, according to 49% of respondents.

- Content management is a popular area used for automation, according to 33% of respondents.

- Paid ads are a popular area used for automation, according to 32% of respondents.

- SMS marketing is a popular area used for automation, according to 30% of respondents.

Leading Marketing Automation Solution Provider

(Reference: statista.com)

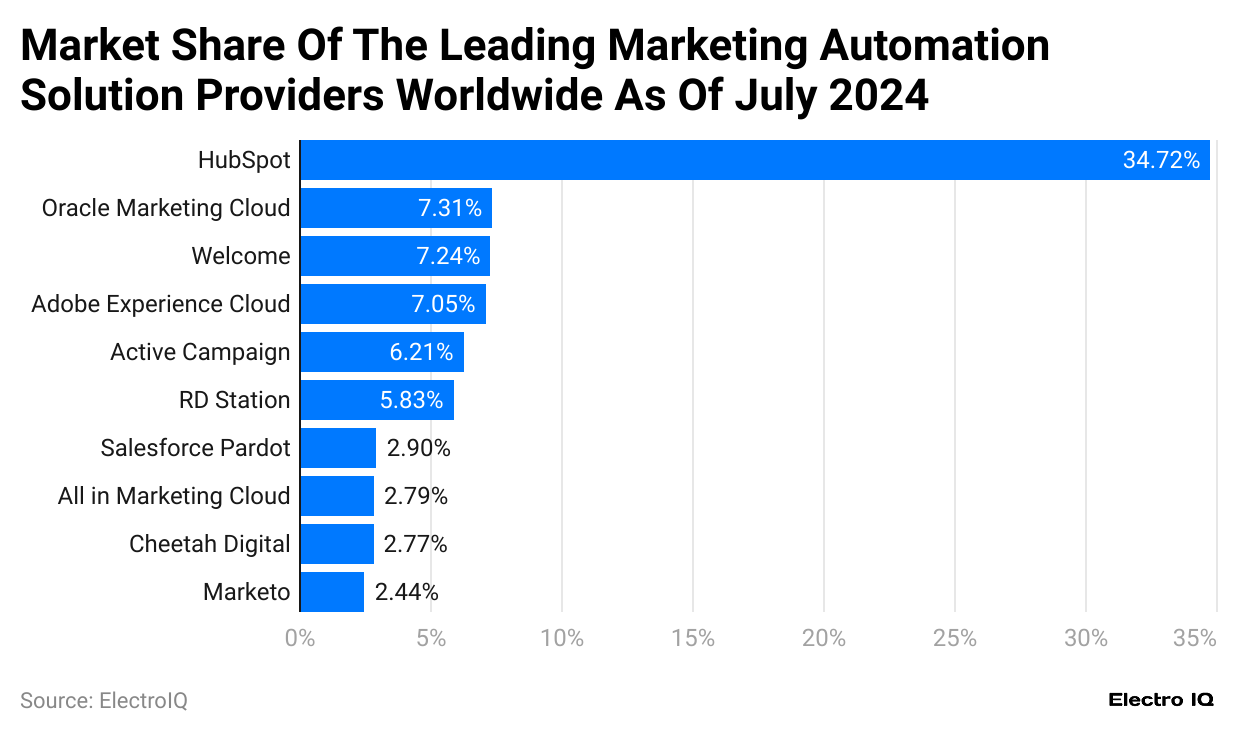

- Marketing Automation Statistics show that HubSpot is the leading marketing automation solution provider, with a market share of 34.72%.

- Oracle Marketing Cloud has the 2nd highest market share among automation solution providers, with 7.31%.

- Welcome has the 3rd highest market share among automation solution providers, with 7.24%.

- Adobe Experience Cloud has the 4th highest market share among automation solution providers, with 7.05%.

- ActiveCampaign has the 5th highest market share among automation solution providers, with 6.21%.

- RD Station has the 6th highest market share among automation solution providers, with 5.83%.
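To put the shares listed above in proportion, they can be summed and compared. The snippet below is a rough sketch that uses only the six percentages quoted in this section; the variable names are illustrative, and the remainder is simply whatever the listed providers do not cover.

```python
# Market shares quoted above, in percent.
shares = {
    "HubSpot": 34.72,
    "Oracle Marketing Cloud": 7.31,
    "Welcome": 7.24,
    "Adobe Experience Cloud": 7.05,
    "ActiveCampaign": 6.21,
    "RD Station": 5.83,
}

# Combined share of the six listed providers and the share left for everyone else.
listed_total = sum(shares.values())
remainder = 100 - listed_total

# How many times larger the leader's share is than the runner-up's.
lead_ratio = shares["HubSpot"] / shares["Oracle Marketing Cloud"]

print(f"Six listed providers combined: {listed_total:.2f}%")
print(f"All other providers: {remainder:.2f}%")
print(f"HubSpot vs. Oracle Marketing Cloud: {lead_ratio:.1f}x")
```

On these figures the six listed providers cover about two-thirds of the market, and HubSpot's share is more than four times that of the runner-up.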

CDP Industry Revenue

(Reference: statista.com)

- Marketing Automation Statistics show that the revenue of the customer data platform industry has been increasing consistently over time.

- In 2020, the customer data platform industry revenue was 1.3 billion USD.

- By the end of 2023, the customer data platform industry revenue had become 2.3 billion USD.

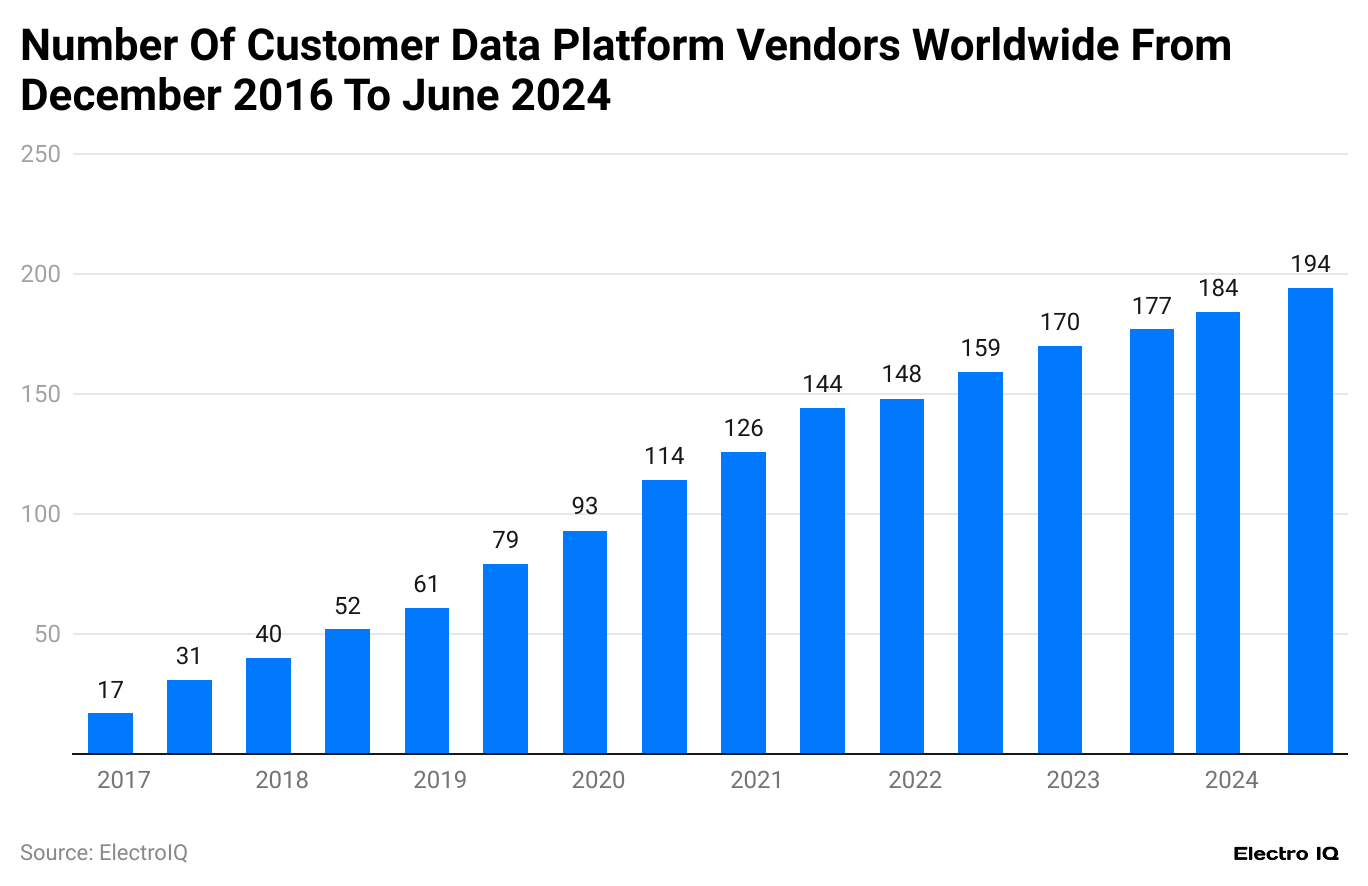

Customer Data Vendor’s Growth

(Reference: statista.com)

- Marketing Automation Statistics show that customer data platform vendors have increased consistently.

- In December 2016, there were 17 customer data platform vendors.

- As of July 2024, there were 194 customer data platform vendors.

Customer Data Platform Industry By Region

(Reference: statista.com)

- Marketing Automation Statistics show that America has the highest share of the customer data platform market, at 80%.

- Asia accounts for 11% of the customer data platform market.

- Europe, the Middle East, and Africa together account for 8% of the customer data platform market.

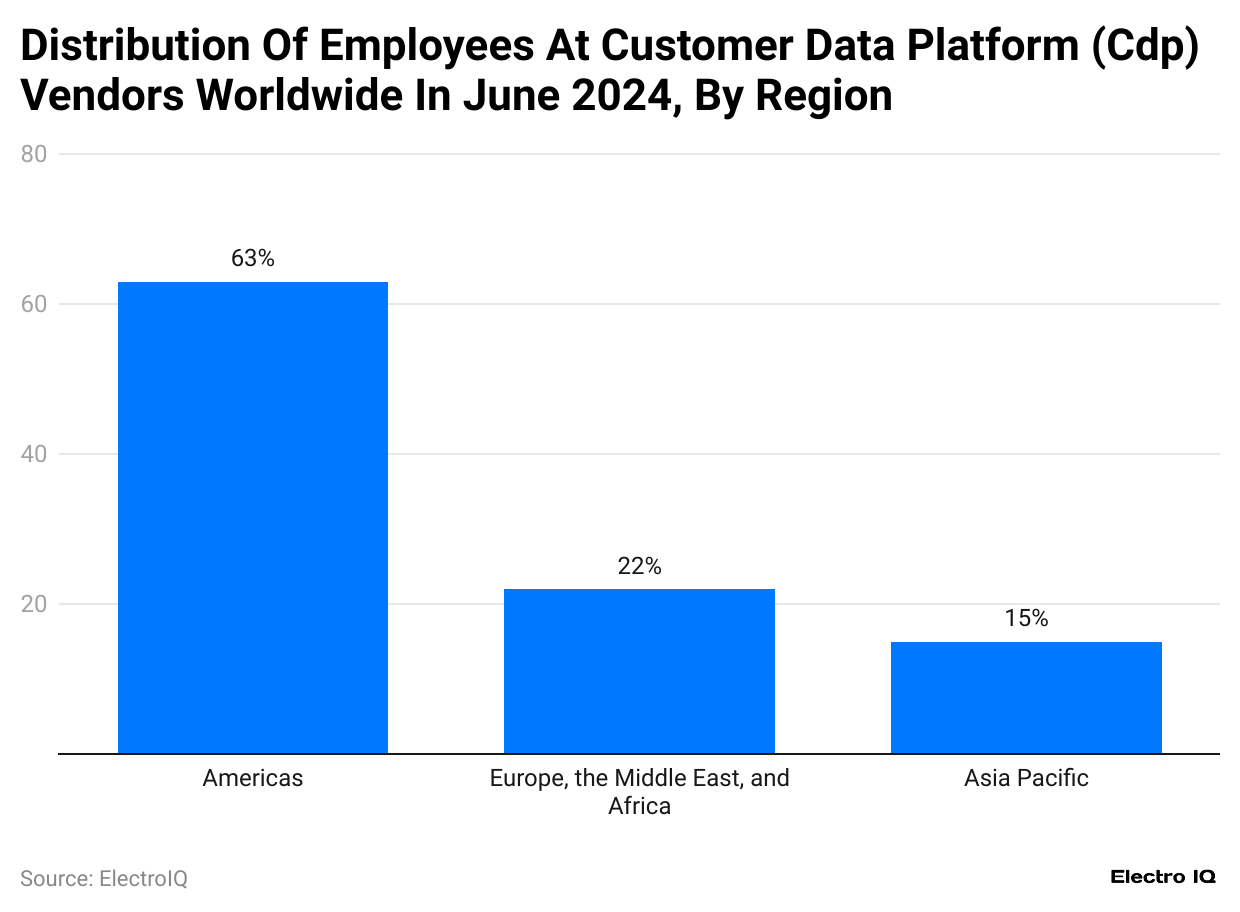

CDP Employee Distribution

(Reference: statista.com)

- Marketing Automation Statistics show that America has the largest number of employees, with a 63% share.

- Europe, the Middle East, and Africa have a 22% share of employees in the customer data platform industry.

- Asia Pacific makes up 15% of employees in the customer data platform industry.

CDP Used By Marketers

(Reference: statista.com)

- Marketing Automation Statistics show that marketing professionals use customer data platforms to personalize their use of customer data.

- 42% of respondents use customer data platforms to consolidate customer data.

- 40% of respondents use customer data platforms for modeling and segmentation.

- 36% of marketers use customer data platforms for data science and customer data extraction.

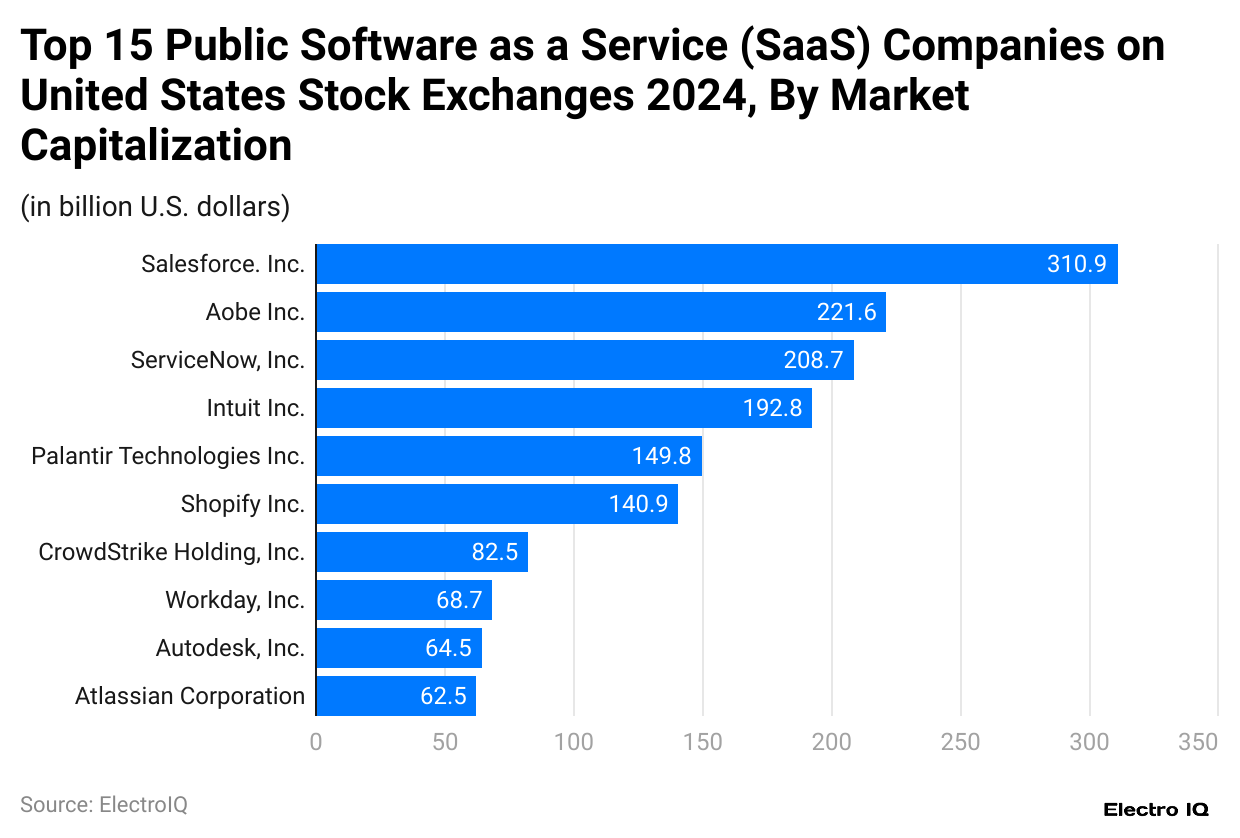

Top SaaS Companies

(Reference: statista.com)

- Marketing Automation Statistics show that Salesforce has the highest market cap, with 310.9 billion USD.

- Adobe Inc. has the 2nd highest market cap of 221.6 billion USD.

- ServiceNow, Inc. has the 3rd highest market cap of 208.7 billion USD.

- Intuit Inc. has the 4th highest market cap of 192.8 billion USD.

- Palantir Technologies Inc. has the 5th highest market cap of 149.8 billion USD.

Small Businesses Concerned Over AI

(Reference: statista.com)

- Marketing Automation Statistics show that concern over data security is the most prominent concern for small and medium enterprises, according to 44% of respondents.

- For 41% of respondents, implementing technology is too expensive for small and medium enterprises.

- 33% of small and medium enterprises feel it would take too much time to learn and implement AI automation technology for their businesses.

- 27% of respondents feel there are no suitable AI tools to assist small and medium enterprises.

- 26% of small and medium enterprises feel there is no value addition to using AI for businesses.

- 12% of respondents have ethical concerns about using AI for marketing purposes.

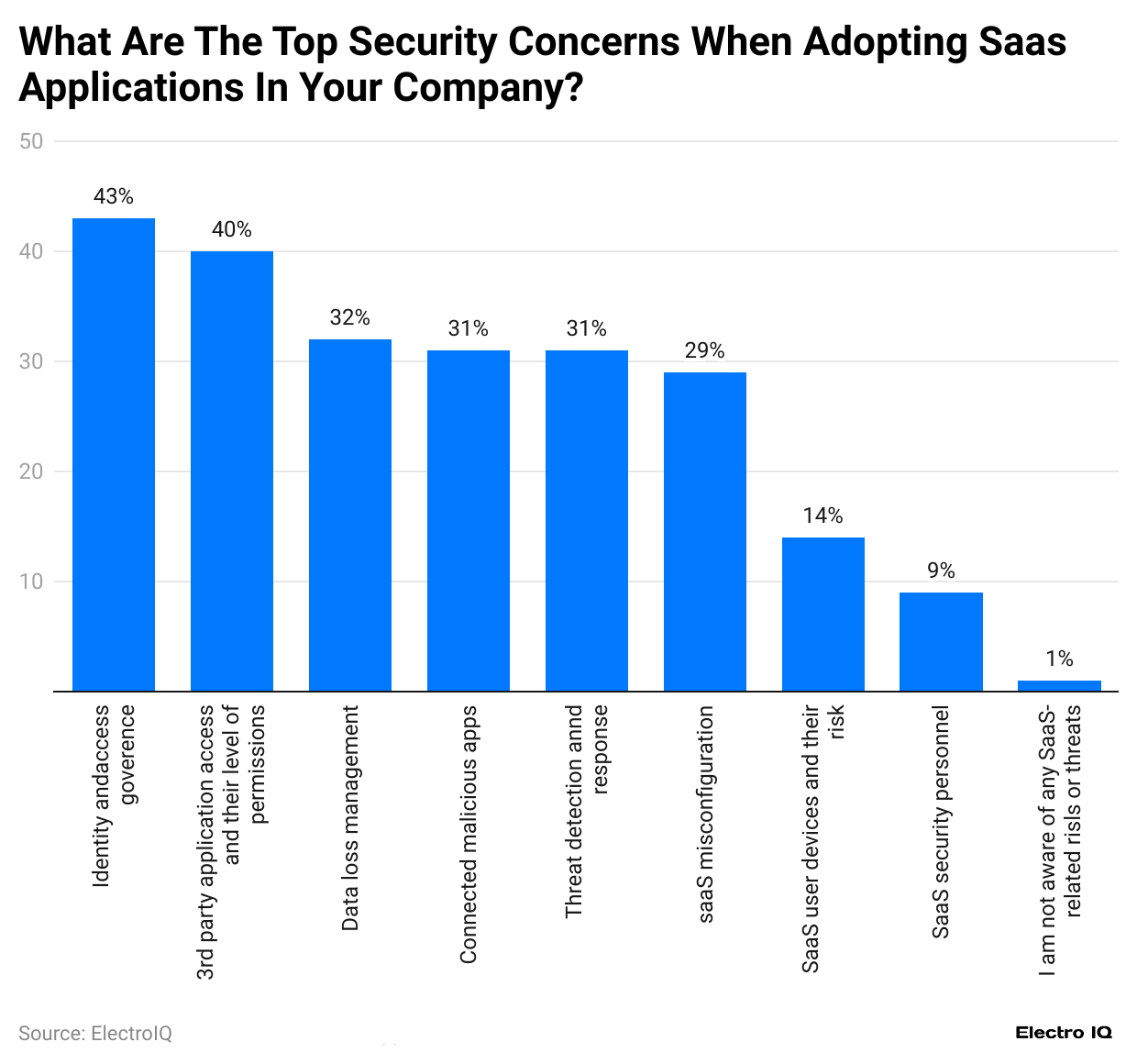

SaaS Implementation Security Concerns

(Reference: statista.com)

- Marketing Automation Statistics show that identity theft is a major concern, according to 43% of respondents using SaaS applications.

- 40% of respondents feel that third-party apps requesting permissions are a major concern when using SaaS applications.

- For 32% of respondents, data loss management is a major concern when adopting SaaS applications in the company.

Conclusion

The marketing automation landscape is being profoundly transformed, driven by technological innovation, data-driven strategies, and evolving customer expectations. Marketing Automation Statistics show that as businesses increasingly recognize the value of sophisticated marketing technologies, the market continues to expand rapidly, with significant growth in solutions, spending, and strategic implementations. The future of marketing lies in intelligent automation, personalized experiences, and data-driven decision-making.

The next decade will likely see continued expansion of marketing automation technologies, with artificial intelligence, machine learning, and advanced analytics playing increasingly critical roles in shaping marketing strategies across industries.

FAQ

Marketing automation is a technology-driven approach to managing marketing processes and campaigns more efficiently across multiple channels.

The market has consistently grown, from USD 508.9 billion in 2022 to USD 669.3 billion in 2023.

Email marketing is the most popular area, with 58% of marketers using it for automation.

HubSpot leads the market with a 34.72% share.

The top concerns include data security (44%) and expensive technology implementation (41%).

As of 2023, there were 11,038 marketing technology solutions available.

The revenue for marketing automation software is expected to reach USD 11.25 billion by 2031.

America dominates this market with an 80% share.

49% of marketers use social media management automation.

The major concerns are identity theft (43%) and third-party app permissions (40%).

Maitrayee Dey has a background in Electrical Engineering and has worked in various technical roles before transitioning to writing. Specializing in technology and Artificial Intelligence, she has served as an Academic Research Analyst and Freelance Writer, particularly focusing on education and healthcare in Australia. Maitrayee's lifelong passions for writing and painting led her to pursue a full-time writing career. She is also the creator of a cooking YouTube channel, where she shares her culinary adventures. At Smartphone Thoughts, Maitrayee brings her expertise in technology to provide in-depth smartphone reviews and app-related statistics, making complex topics easy to understand for all readers.